AnandTech has been reviewing the AGEIA PhysX cards this month, and what they are saying is pretty much what I expected when I wrote my previous post about the cards. From their review:

Playing the CellFactor demo for a while, messing around in the Hangar of Doom, and blowing up things in GRAW and City of Villains is a start, but it is only a start. As we said before, we can’t recommend buying a PPU unless money is no object and the games which do support it are your absolute favorites. Even then, the advantages of owning the hardware are limited and questionable (due to the performance issues we’ve observed).

Seeing City of Villains behave in the same manner as GRAW gives us pause about the capability of near term titles to properly support and implement hardware physics support. The situation is even worse if the issue is not in the software implementation. If spawning lots of effects on the PhysX card makes the system stutter, then it defeats the purpose of having such a card in the first place. If similar effects could be possible on the CPU or GPU with no less of a performance hit, then why spend $300?

Performance is a large issue, and without more tests to really get under the skin of what’s going on, it is very hard for us to know if there is a way to fix it or not. The solution could be as simple as making better use of the hardware while idle, or as complex as redesigning an entire game/physics engine from the ground up to take advantage of the hardware features offered by AGEIA.

[…]

As an end user, we would like to say that the promise of upcoming titles is enough. Unfortunately, it is not by a long shot. We still need hard and fast ways to properly compare the same physics algorithm running on a CPU, a GPU, and a PPU — or at the very least, on a (dual/multi-core) CPU and PPU. More titles must actually be released and fully support PhysX hardware in production code. Performance issues must not exist, as stuttering framerates have nothing to do with why people spend thousands of dollars on a gaming rig.

Here’s to hoping everything magically falls into place, and games like CellFactor are much closer than we think. (Hey, even reviewers can dream… right?)

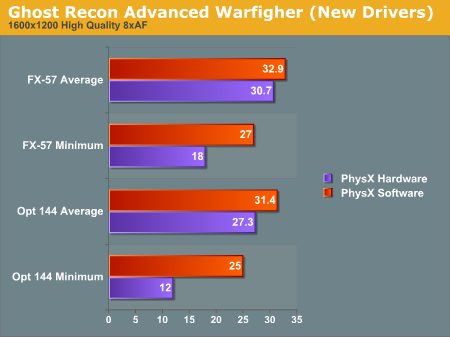

It is pretty bad that performance actually goes down when the card is in use.

You can read the whole article here.